Wemap AR

Introduction

Wemap AR is an option of Wemap technology that lets users access the content and services associated with a Wemap map through an augmented reality experience.

As an illustration, the Wemap AR augmented reality experience can be used for use cases such as:

- Visual search to find products (store or mall) or points of interest (city) around you;

- Visual search to identify your environment: identification of distant visual anchors during a journey by train, plane or car;

- Step-by-step navigation in a heads-up display, without the need for an interactive map.

Wemap AR is structured around three components, two client-side and one server-side:

- AR view (client side): where interface elements and content are displayed and where interactions happen;

- Positioning engine (client side): continuously recalculates the position and orientation of the device from the available signals, maps and phone sensors;

- Wemap engine (server side): where the application parameters are defined (content, interface, display, etc.).

This documentation covers these three components in turn: how they work, the technical choices they are based on and the parameters they support.

Wemap AR is available to Wemap Pro users who have subscribed to the Wemap AR option, with or without navigation. For any question about this option, contact us at pro@getwemap.com or via the online chat.

Summary

- Activation of augmented reality (Wemap AR) and authorizations

- Environments

- AR view

- Positioning engine

- Wemap engine

- Integrations

1. AR activation and authorizations

Wemap AR is an option that can be used for any livemap in the Wemap environment.

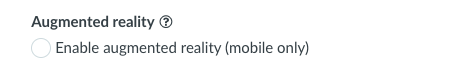

1.1. Activate augmented reality in your application or on your website

To activate the Wemap AR option on a Wemap map, go to Wemap Pro.

In Wemap Pro, select the map, click "Display of points", then check the box "Activate augmented reality (mobile only)". See the Wemap Pro documentation for details.

1.2. Authorization management

The management of authorization requests is integrated into the Wemap SDKs.

To work, augmented reality needs access to the camera (to display the augmented reality view) and to the location and inertial sensors (to position and orient the smartphone). The user must therefore authorize the Wemap application to access these resources when permissions are requested.

In some cases, additional signals can be used without requiring authorization:

- Bluetooth (indoor location if the building is equipped with an infrastructure);

- SLAM (simultaneous localization and mapping).

1.3. Restrictions

Only devices that have all of the following sensors can use augmented reality: camera, GPS, accelerometer, gyroscope and magnetometer. This covers a large majority of smartphones as of 2021.

If any of these sensors is missing, Wemap AR does not activate and the application remains in "map" mode.
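As an illustration, the fallback rule above can be sketched as follows. This is a minimal sketch, not the Wemap SDK: the sensor labels and the `select_mode` function are hypothetical names chosen for the example.

```python
# Sensors listed in the restrictions above. The string labels are
# illustrative and do not come from the Wemap SDK.
REQUIRED_SENSORS = frozenset(
    {"camera", "gps", "accelerometer", "gyroscope", "magnetometer"}
)


def select_mode(available_sensors):
    """Return "ar" when every required sensor is present, otherwise "map"."""
    missing = REQUIRED_SENSORS - set(available_sensors)
    return "map" if missing else "ar"
```

A device that reports every sensor except the magnetometer would therefore stay in "map" mode.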

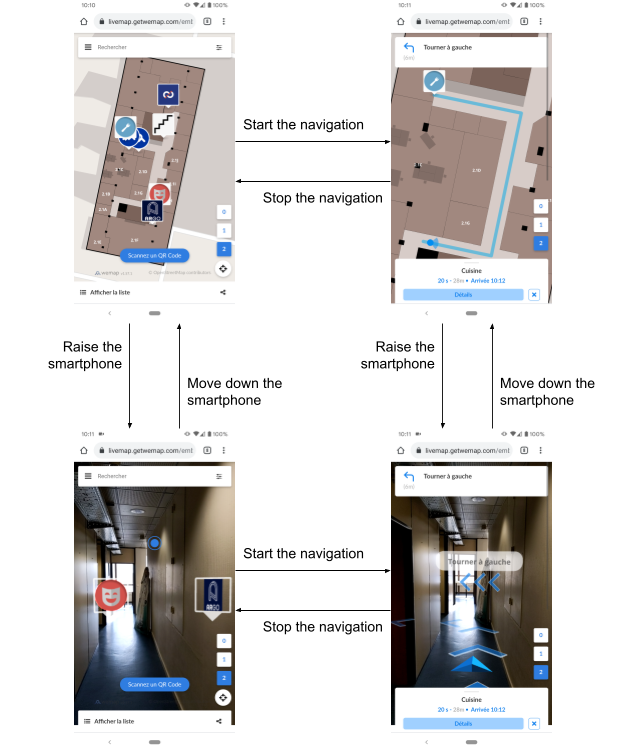

1.4. User activation of augmented reality

Once Wemap AR is activated in your application or on your website, the augmented reality view (AR view) can be triggered by the user in one of two ways, depending on the setting:

- Parameter 1 (automatic): motion detection switches to the AR view when the smartphone is held vertically;

- Parameter 2 (button): the AR view is triggered when the user has clicked on the augmented reality button AND the smartphone is held vertically.
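The two trigger modes can be sketched as a small decision function. This is a hedged illustration only: the function names, the gravity-vector convention (z axis pointing out of the screen) and the 30-degree tolerance are assumptions made for the example, not documented Wemap behavior.

```python
import math


def is_vertical(gx, gy, gz, tolerance_deg=30.0):
    """Return True when the screen is within tolerance_deg of upright.

    (gx, gy, gz) is the gravity vector in device axes, with z pointing out
    of the screen. tolerance_deg is an assumed value, not a Wemap setting.
    """
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    if norm == 0.0:
        return False
    # Angle between gravity and the screen plane: 0 when upright, 90 when flat.
    tilt_deg = math.degrees(math.asin(min(1.0, abs(gz) / norm)))
    return tilt_deg <= tolerance_deg


def should_show_ar_view(trigger, vertical, button_clicked=False):
    """Apply the "automatic" or "button" rule described above."""
    if trigger == "automatic":
        return vertical
    if trigger == "button":
        return button_clicked and vertical
    raise ValueError(f"unknown trigger: {trigger!r}")
```

In "button" mode, holding the phone upright is not enough on its own: the click is required as well.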

AR Button

Userflow

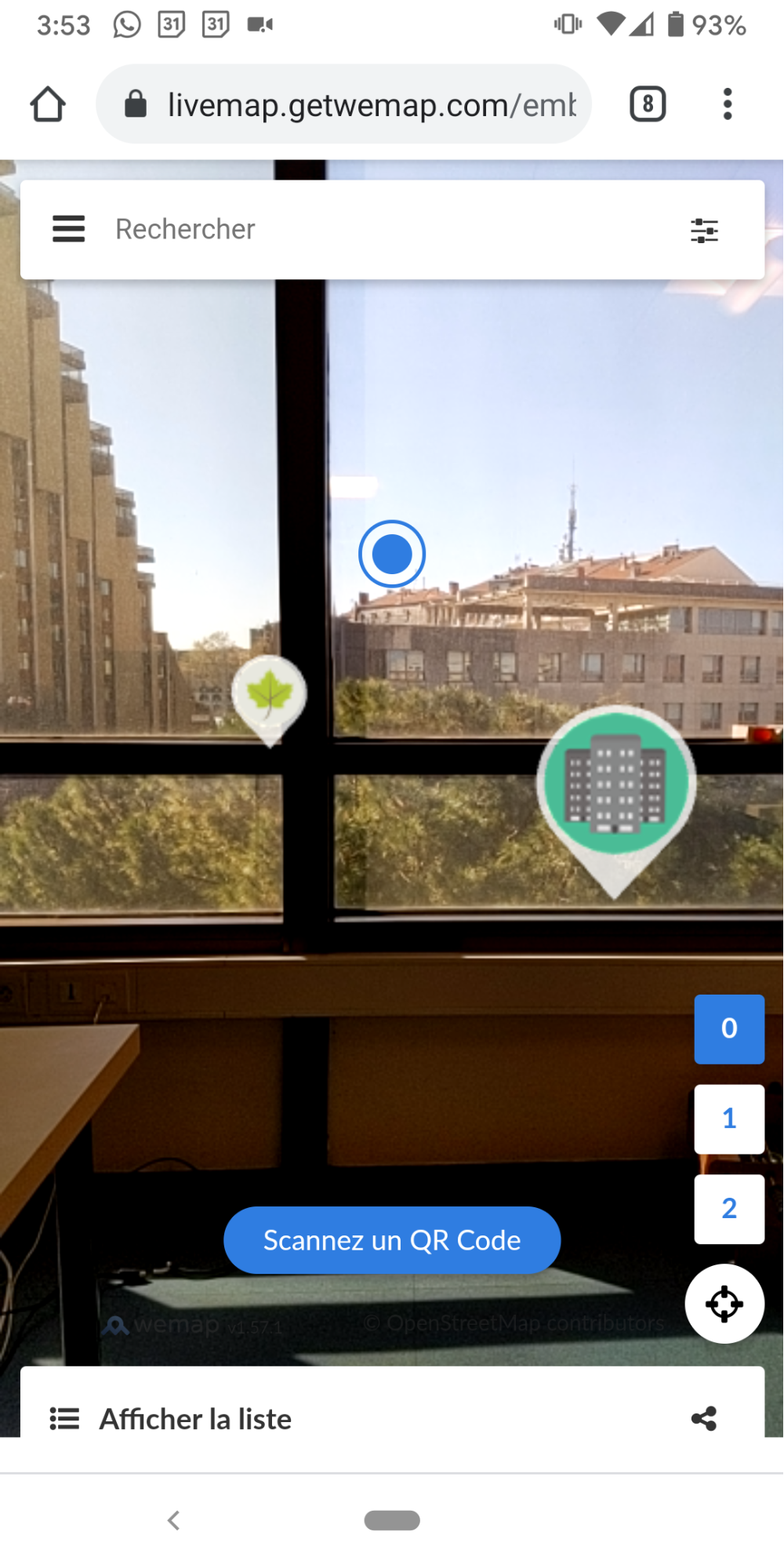

2. Environments

Wemap AR works both outdoors and inside buildings. The environment is an application setting.

An option to switch from one to the other will be available in Wemap Pro (coming soon). This option controls, in a consistent way, all the interface and user experience (UI / UX) parameters that depend on the environment (size of 3D assets, number of visible chevrons, see below).

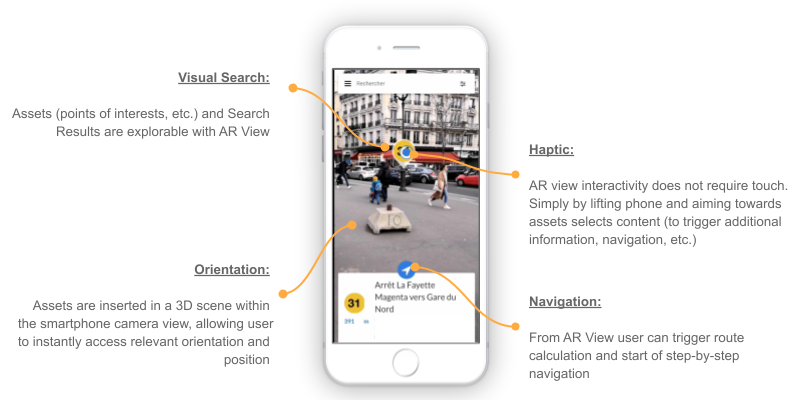

3. AR view

The AR view is a 3D scene oriented in space using the orientation and position of the smartphone. Content can be either two-dimensional and "attached" to the phone screen, or positioned in space, in the 3D scene.

The scene includes:

- point-of-interest content, positioned in space (in the 3D scene);

- navigation instructions and indications ("route" status), some positioned in space and others in two dimensions;

- UI elements (example: locate button, back to map button, search, etc.).

In simplified terms, the scene has three states corresponding to the main Wemap AR use cases:

- Visual search

- Opened content

- Navigation.

3.1. Visual search

(i) The content displayed in the 3D scene consists of the pinpoints that:

- are in the field of view of the smartphone (directed by the inertial unit);

- are within the distance set by "Filter by distance: Distance (ar radius)" [parameter can be modified in Wemap Pro].

By default, to avoid saturating the screen with visual elements, Wemap AR displays only the points that are within a radius of 1000 meters around the user (this parameter is adjustable in Wemap Pro).
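The two criteria above (field of view and distance radius) can be illustrated with a short filter. This is a sketch under stated assumptions, not Wemap's implementation: the `(name, lat, lon)` pinpoint tuples, the function names and the 60-degree horizontal field of view are hypothetical. Only the 1000-meter default radius comes from this documentation.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle (haversine) distance in meters."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin((p2 - p1) / 2) ** 2
         + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial bearing from point 1 to point 2, in degrees from north."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(p2)
    x = (math.cos(p1) * math.sin(p2)
         - math.sin(p1) * math.cos(p2) * math.cos(dlmb))
    return math.degrees(math.atan2(y, x)) % 360.0


def visible_pinpoints(user, heading_deg, pinpoints,
                      ar_radius_m=1000.0, fov_deg=60.0):
    """Names of pinpoints inside both the radius and the field of view.

    user is (lat, lon); pinpoints is an iterable of (name, lat, lon).
    fov_deg is an assumed horizontal field of view.
    """
    visible = []
    for name, lat, lon in pinpoints:
        if distance_m(user[0], user[1], lat, lon) > ar_radius_m:
            continue
        # Signed angle between the device heading and the pinpoint direction.
        offset = (bearing_deg(user[0], user[1], lat, lon)
                  - heading_deg + 180.0) % 360.0 - 180.0
        if abs(offset) <= fov_deg / 2:
            visible.append(name)
    return visible
```

With the user facing north, a pinpoint a few hundred meters to the north is kept, while one to the east or several kilometers away is filtered out.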

In addition, via integration or on request, an editorial filter can be set up so that only part of the content on the map is displayed in AR view (see filters and tags operation). Contact us to find out more.

(ii) Representation of assets in the 3D scene:

- Internal parameters of the pinpoint (icon, image thumbnail)

- Pinpoint scale parameter in the 3D scene

To facilitate visual search, Wemap AR applies a formula that reduces the size of displayed assets according to their distance and the ar-radius parameter. The formula is linear and ensures that a point at the edge of the viewing radius is half the size of a point 10 meters away.

To adjust the formula, contact us.

- Other types of assets: other asset types (buildings, 3D objects) can be inserted; contact us.
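The linear scaling rule described above can be written out as a short function. This is one possible reading, offered as a sketch: the function name, the reference scale of 1.0 at 10 meters and the clamping outside the 10 m to ar-radius range are assumptions. The documentation only states that the formula is linear and that a point at the edge of the radius is half the size of a point 10 meters away.

```python
def pinpoint_scale(distance_m, ar_radius_m=1000.0, near_m=10.0):
    """Linear scale factor: 1.0 at near_m, 0.5 at ar_radius_m.

    Distances outside [near_m, ar_radius_m] are clamped (an assumption).
    """
    if ar_radius_m <= near_m:
        return 1.0
    d = min(max(distance_m, near_m), ar_radius_m)
    return 1.0 - 0.5 * (d - near_m) / (ar_radius_m - near_m)
```

With the default radius of 1000 meters, a pinpoint 505 meters away (halfway between 10 and 1000) is drawn at 0.75 of the reference size.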

(iii) Interaction: pinpoints are selected with a viewfinder, with a "focusing" duration fixed at 1 second (contact us to make it a parameter).
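A viewfinder selection with a fixed focusing duration is commonly implemented as a dwell timer. The sketch below shows the idea only: the `DwellSelector` class and its `update` method are hypothetical names, and the actual Wemap logic may differ.

```python
class DwellSelector:
    """Select a pinpoint once it has stayed under the viewfinder long enough."""

    def __init__(self, focus_duration_s=1.0):
        self.focus_duration_s = focus_duration_s
        self._target = None   # pinpoint currently under the viewfinder
        self._since = None    # time at which that pinpoint was first seen
        self._fired = False   # True once the current target was selected

    def update(self, target, now_s):
        """Call on every frame with the pinpoint under the viewfinder (or None).

        Returns the pinpoint once, when the focusing duration has elapsed.
        """
        if target != self._target:
            # The viewfinder moved to another pinpoint: restart the timer.
            self._target = target
            self._since = now_s
            self._fired = False
            return None
        if target is None or self._fired:
            return None
        if now_s - self._since >= self.focus_duration_s:
            self._fired = True
            return target
        return None
```

Moving the viewfinder away from a pinpoint before the duration elapses resets the timer, so a brief pass over a pinpoint does not open it.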

3.2. Opened content

This state displays the information contained in the selected pinpoint.

3.3. Navigation

The virtual scene contains three types of objects (assets):

- chevrons placed on the ground, which represent the route to be followed;

- instructions when the user needs to change direction (turn left, climb the stairs…), in three dimensions;

- a directional arrow which indicates the position of the next instruction according to the orientation of the smartphone, rendered in three dimensions but always placed at the position of the user / smartphone.
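The directional arrow has to point toward the next instruction whatever way the smartphone is facing. One way to express this, as a sketch with hypothetical names and compass angles in degrees, is the signed difference between the bearing to the instruction and the device heading:

```python
def arrow_rotation_deg(device_heading_deg, bearing_to_instruction_deg):
    """Signed rotation of the arrow relative to the device, in [-180, 180).

    Positive values mean the next instruction is to the user's right,
    negative values to the left.
    """
    diff = bearing_to_instruction_deg - device_heading_deg
    return (diff + 180.0) % 360.0 - 180.0
```

The modulo keeps the result correct across the north wrap-around, for example when the device faces 350 degrees and the instruction lies at 10 degrees.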

The navigation instructions rely on our routing server and on step-by-step instructions that follow the Open Source Routing Machine (OSRM) data model. The route calculator supports the following types of routes: outdoor, indoor, indoor to outdoor, outdoor to indoor, and indoor to outdoor to indoor.

To add the route network of your space (building, park, etc.) to our routing engine, contact us.

Navigation is available to Wemap Pro users who have subscribed to the Wemap AR and Wemap Navigation options. For any question about this option, contact us at pro@getwemap.com or via the online chat.

4. Positioning engine

The Wemap positioning engine fuses data from multiple sources, including accelerometer, gyroscope, magnetometer, GNSS, Wi-Fi, Bluetooth, camera and mapping (see blog article).

Indoors, in the absence of GNSS signals, it is necessary to implement a third-party absolute positioning solution. This can be:

- Bluetooth Beacons

- Wi-Fi

- QR code (contact us)

- Vision (contact us, see blog article)

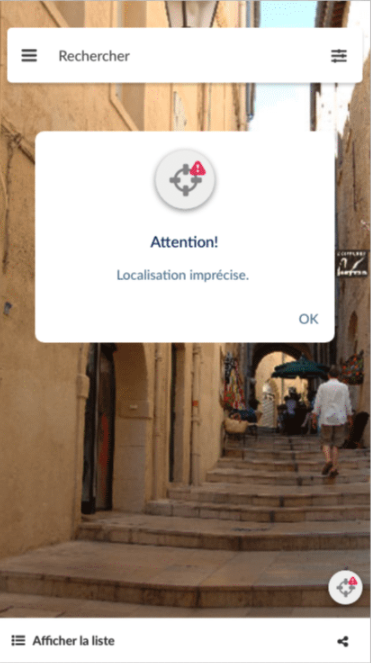

If the position and orientation reported by the positioning engine are not accurate enough, an alert pop-up is displayed to the user (image below). The message appears when the position accuracy is worse than 25 meters outdoors or 8 meters indoors.

To adjust this behavior, contact us.
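The alert rule can be sketched as a simple threshold check. The 25-meter and 8-meter limits come from the text above; the names below are hypothetical, and the sketch assumes that accuracy is reported as an estimated error radius in meters, so that a larger value means a less accurate position.

```python
# Limits stated in this documentation; the dictionary keys are illustrative.
ACCURACY_LIMIT_M = {"outdoor": 25.0, "indoor": 8.0}


def should_show_accuracy_alert(accuracy_m, environment):
    """Return True when the error radius exceeds the limit for the environment."""
    return accuracy_m > ACCURACY_LIMIT_M[environment]
```

Under this reading, a 10-meter error radius is acceptable outdoors but triggers the alert indoors.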

5. Wemap engine

The Wemap engine allows you to define the content that can be explored from the Wemap AR application. To do this, refer to the "livemap" documentation.

6. Integrations

Augmented reality is available on all platforms:

- iframe (web)

- SDK (web, iOS, Android, React Native)

- App (iOS, Android)

To enable it, check the activation box in Wemap Pro.